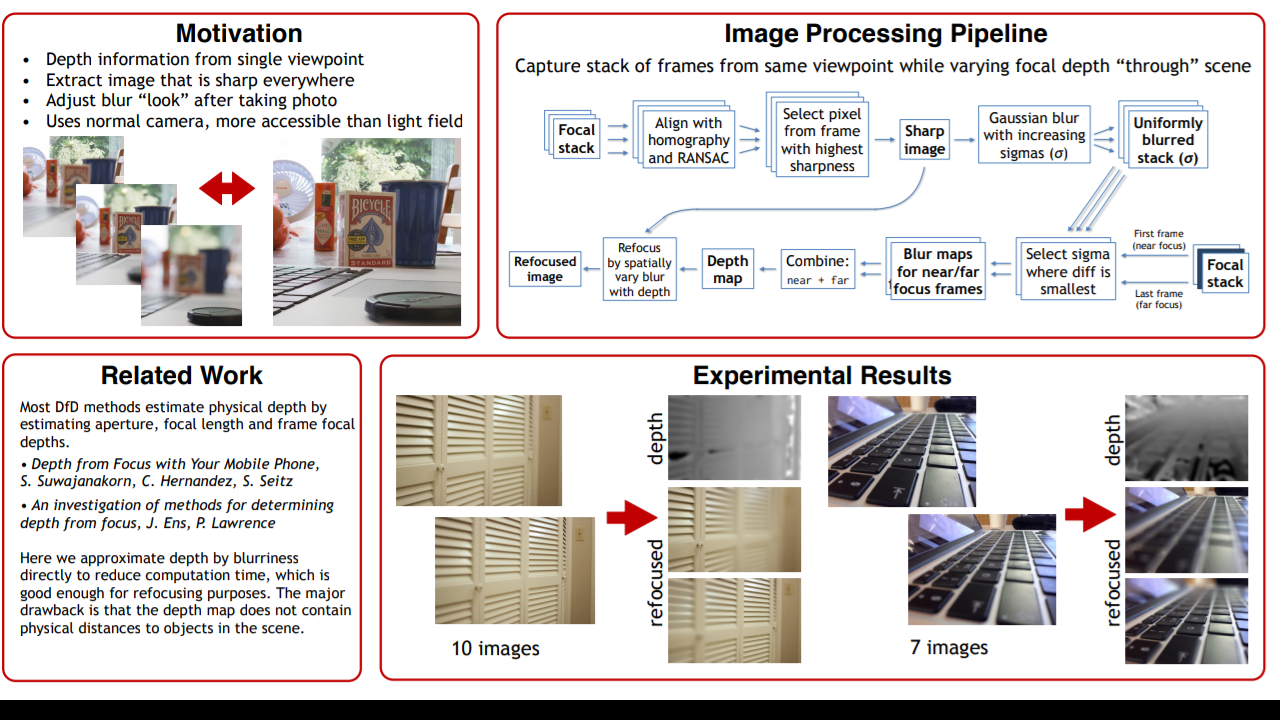

Depth from Defocus for Mobile Cameras

Overview

Depth from Defocus (DFD) is a technique in which a depth image of a scene is reconstructed from multiple images taken by a single camera with varying camera parameters [1]. The parameters that affect the defocus characteristics of an image are the distance to the focus plane, the focal length, and the aperture size, which controls the depth of field. I would like to explore and implement DFD methods on smartphones [2]. My aim is to display a captured scene using a 3D technique such as parallax mapping. One application is the simple capture of 3D photos of people or of sculptures.

Technical Details

In the first stage I will implement a DFD method in MATLAB using image stacks taken by a stationary DSLR camera, i.e. with no translation or parallax between the images. This will give me a solid understanding of the mathematics behind the optics and the algorithms. In the second stage I will extend the above me...
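The defocus parameters listed in the overview (focus distance, focal length, aperture) can be related to depth through the standard thin-lens blur-circle model, c = A f |s - s_f| / (s (s_f - f)), where A = f / N is the aperture diameter for f-number N. The sketch below illustrates that model under idealized assumptions (no diffraction or aberrations, and the blur diameter already measured per pixel); it is not the DFD method this project will implement. The function names and the 50 mm f/2.8 example values are hypothetical. It shows why a single defocused image is ambiguous (the same blur can come from a point in front of or behind the focus plane) and how a second image focused at a different distance resolves the ambiguity.

```python
import math


def blur_diameter(s, s_f, f, n):
    """Thin-lens blur-circle diameter on the sensor (same units as f).

    s: object distance, s_f: distance of the plane in focus (must exceed f),
    f: focal length, n: f-number (aperture diameter is f / n).
    """
    aperture = f / n
    return aperture * f * abs(s - s_f) / (s * (s_f - f))


def depth_candidates(c, s_f, f, n):
    """Invert blur_diameter: object distances that produce blur diameter c.

    One candidate lies in front of the focus plane; a second one, behind it,
    exists only when k < 1.
    """
    aperture = f / n
    k = c * (s_f - f) / (aperture * f)    # k = |s - s_f| / s
    candidates = [s_f / (1 + k)]          # object nearer than the focus plane
    if k < 1:
        candidates.append(s_f / (1 - k))  # object farther than the focus plane
    return candidates


def depth_from_two_settings(c1, s_f1, c2, s_f2, f, n):
    """Choose the candidate from image 1 that best predicts the blur in image 2."""
    best, best_err = None, math.inf
    for s in depth_candidates(c1, s_f1, f, n):
        err = abs(blur_diameter(s, s_f2, f, n) - c2)
        if err < best_err:
            best, best_err = s, err
    return best


if __name__ == "__main__":
    f, n = 0.05, 2.8                              # hypothetical 50 mm lens at f/2.8, in metres
    true_depth = 1.5
    c1 = blur_diameter(true_depth, 1.0, f, n)     # image 1 focused at 1 m
    c2 = blur_diameter(true_depth, 2.0, f, n)     # image 2 focused at 2 m
    print(depth_candidates(c1, 1.0, f, n))        # approximately [0.75, 1.5]
    print(depth_from_two_settings(c1, 1.0, c2, 2.0, f, n))  # approximately 1.5
```

With a stationary camera, as planned for the first stage, each pixel sees the same scene point in every image of the stack, so a per-pixel comparison like this is meaningful; a handheld phone capture would first need the images aligned.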